Hi there Jason! Can you briefly introduce yourself?

I'm a consultant in digital marketing and SEO, and I'm currently traveling the world as a digital nomad, going from conference to conference and living in hotels.

You may have heard about my #SEOisAEO podcast, where I talk to really smart people about their specialist subjects. It's really exciting because they teach me so much. The episodes are 15- to 30-minute interviews in which I ask my guests to share with me, and with the community, something they find incredibly important in their field. The ambiance is really relaxed, and yet the content is hugely interesting and useful. David Amerland suggests that it is "The most fun you'll ever have learning about digital from the experts".

If you had to pick a favorite topic in SEO, what would it be?

Knowledge graphs!

I talk a lot about knowledge graphs because five years ago I started working on what shows up when I type my name into Google. Nowadays, what comes up when you search for "Jason Barnard" is more or less what I want to come up. Whether you are a brand or a person, your Brand SERP is something you should definitely be looking at and working on right now, since it turns out (luckily for me) to be the key to your presence in Google's Knowledge Graph.

Can you briefly explain what the Knowledge Graph is?

Most simply put, a knowledge graph is an encyclopedia that's readable by machines. So it's basically knowledge organized in a manner that a machine can easily understand and extract information from. Technically speaking, you're looking at graph theory - nodes, edges and attributes. More simply and more practically in our industry, we are looking at knowledge graphs where you've got entities with attributes and relationships to other entities (also with attributes).
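To make "entities with attributes and relationships to other entities (also with attributes)" concrete, here is a minimal in-memory sketch in Python. It is a toy model for illustration only, not how Google stores its Knowledge Graph; the class names, entity IDs and the `hosts` predicate are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Entity:
    """A node: a 'thing' with its own attributes."""
    id: str
    attributes: dict[str, str] = field(default_factory=dict)


@dataclass
class Relationship:
    """An edge: a typed link between two entities, which can carry attributes too."""
    subject: str
    predicate: str
    obj: str
    attributes: dict[str, str] = field(default_factory=dict)


class KnowledgeGraph:
    def __init__(self) -> None:
        self.entities: dict[str, Entity] = {}
        self.relationships: list[Relationship] = []

    def add_entity(self, entity_id: str, **attributes: str) -> None:
        self.entities[entity_id] = Entity(entity_id, dict(attributes))

    def relate(self, subject: str, predicate: str, obj: str, **attributes: str) -> None:
        # Relationships link known entities ("things"), not arbitrary strings.
        if subject not in self.entities or obj not in self.entities:
            raise KeyError("both entities must exist before they can be related")
        self.relationships.append(Relationship(subject, predicate, obj, dict(attributes)))

    def neighbors(self, subject: str, predicate: str) -> list[str]:
        """Follow every edge of one type out of an entity."""
        return [r.obj for r in self.relationships
                if r.subject == subject and r.predicate == predicate]


# Hypothetical example data.
kg = KnowledgeGraph()
kg.add_entity("jason_barnard", type="Person", name="Jason Barnard")
kg.add_entity("seoisaeo", type="PodcastSeries", name="#SEOisAEO")
kg.relate("jason_barnard", "hosts", "seoisaeo", role="host")
print(kg.neighbors("jason_barnard", "hosts"))  # ['seoisaeo']
```

Note that the relationship itself carries an attribute (`role`), which is the "edges with attributes" part of the graph-theory description.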

Google's knowledge graph is called the Knowledge Graph, and its aim is to answer users' questions by analyzing what the words in a query actually mean, rather than simply matching strings of characters. So nowadays it's about things, not strings.

The simplest explanation of a knowledge graph? You may not realize it, but that's actually what your mind is: a big knowledge graph of 'things' in context that your brain links together through their relationships. Google may well eventually do a better job than our brains, because it isn't going to 'forget'. Presumably, once it gets something right, it won't get it wrong again, whereas a human being forgets lots of things and makes mistakes. It's essentially superhuman knowledge, which is very exciting.

If you want to get an idea of how fast this is evolving, look at rich elements in the SERPs. Every few months a new SERP feature rolls out, and usually it's related to the Knowledge Graph.

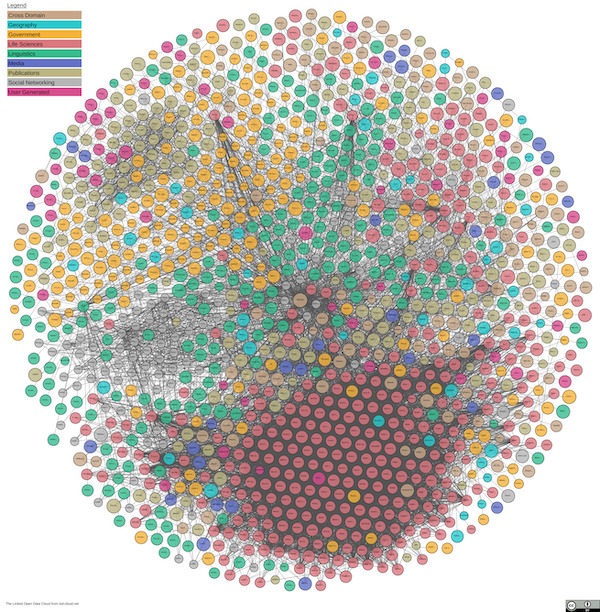

Also, let's not forget the many other companies building sizable knowledge graphs (Amazon, Apple, Facebook, Microsoft and Diffbot), or the enormous advances being made with an openly accessible data set called the Linked Open Data Cloud.

Quick note: Google's Knowledge Graph (note the capital letters making it a proper noun) is just one example of a knowledge graph (no capital letters making it a common noun). There are hundreds of thousands (millions?) of knowledge graphs out there. In this chat I am mostly talking about Google's, since that affects the search industry the most right now.

What do you see when you read between the lines?

Rich SERP elements reveal a lot about how Google functions: what it's trying to do, how it's doing it and what it knows about entities.

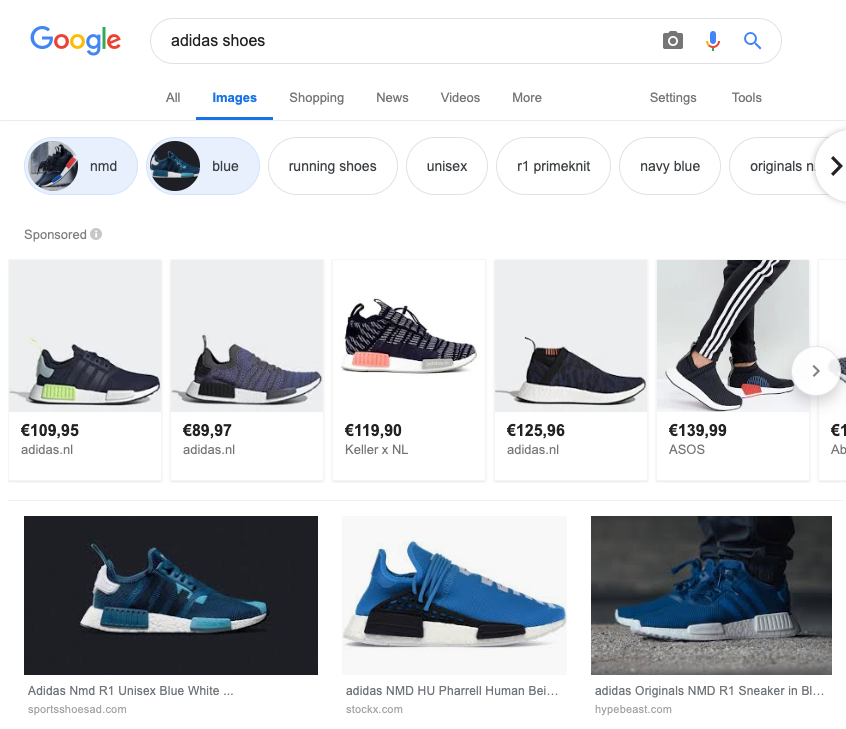

Take, for instance, the filtered navigation in image search, which lets you dig through images by subtopic or context. It seems simple, but it's very powerful and clearly indicates a certain level of understanding. Look, too, at SERP features like related products, related topics and People Also Ask. They are all growing fast, both in how visible Google allows them to be and in the accuracy and relevance of what they show.

Keep an eye on developments in the Knowledge Graph to stay ahead of the curve. Follow people like Bill Slawski, Andrea Volpini or David Harry to be at the cutting edge 🙂

Is Google Maps a knowledge graph?

Yes, it sure is! Google Maps is an example of a functioning knowledge graph: it understands a vast number of entities, their attributes and the relationships between them, and can therefore resolve previously unseen geospatial queries in real time.

If you want to know where search/answer engines are heading over the next four to five years, look at how Google Maps functions and you'll get a very clear picture.

The example I would use is searching for a coffee shop (Starbucks in this case) while you're standing on a street on a particular day at a particular time. Google Maps will tell you the best route to the coffee shop on foot or by car, giving you a different route depending on your means of transport and factoring in traffic jams as well. So it is linking me (a specific entity) to the coffee shop (a specific entity) with a relationship (how to get there) in a specific context (car, boat, walk…).

That does make you think: every query we make on Google is unique and is aimed at linking two entities together with a pertinent and optimal relationship.
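The idea that the same two entities get a different "best" relationship depending on context can be illustrated with a toy shortest-path search in Python. This is only a sketch under heavy assumptions: the street network, the travel times, the one-way streets and the use of Dijkstra's algorithm are all hypothetical and say nothing about how Google Maps actually computes routes.

```python
import heapq

# Hypothetical one-way street network: travel time in minutes per means of transport.
# None means that mode cannot use this segment (e.g. cars on a park path).
EDGES = {
    ("me", "main_st"): {"walk": 4, "car": 2},
    ("main_st", "starbucks"): {"walk": 6, "car": 6},  # car time includes a traffic jam
    ("me", "park_path"): {"walk": 3, "car": None},
    ("park_path", "starbucks"): {"walk": 4, "car": None},
}


def best_route(start: str, goal: str, mode: str) -> tuple[int, list[str]]:
    """Dijkstra's shortest path, using the travel times for the chosen mode."""
    graph: dict[str, list[tuple[str, int]]] = {}
    for (a, b), costs in EDGES.items():
        cost = costs.get(mode)
        if cost is not None:
            graph.setdefault(a, []).append((b, cost))

    queue = [(0, start, [start])]  # (minutes so far, current node, path taken)
    visited: set[str] = set()
    while queue:
        minutes, node, path = heapq.heappop(queue)
        if node == goal:
            return minutes, path
        if node in visited:
            continue
        visited.add(node)
        for nxt, cost in graph.get(node, []):
            if nxt not in visited:
                heapq.heappush(queue, (minutes + cost, nxt, path + [nxt]))
    raise ValueError(f"no {mode} route from {start} to {goal}")


print(best_route("me", "starbucks", "walk"))  # (7, ['me', 'park_path', 'starbucks'])
print(best_route("me", "starbucks", "car"))   # (8, ['me', 'main_st', 'starbucks'])
```

Same two entities, two different answers: the context (mode of transport, traffic) changes which relationship is "optimal".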

Google Maps illustrates today what search will look like in the future. Study it now to get a very good sense of what the world of search/answer engines will look like tomorrow.

Why is this happening now?

The idea of building computer-driven knowledge graphs has been around since the 1960s, but technology held us back. Without large-scale, reliable data storage, database queries and machine learning, building and maintaining a knowledge graph at the scale Google needs was simply not possible.

Over the last 15 years, five major technological advances have emerged that have made industrial-scale machine learning a reality: Big Data, NoSQL, BigQuery, Data Rivers and ASIC chips. Google's dream has come true.

Without machine learning, Google's Knowledge Graph wouldn't be the same. It allows Google (and other companies building knowledge graphs) to move away from relying on human-curated data and facts (think Wikipedia, Wikidata, Crunchbase, etc.) and into a world where machines can collect and validate facts (semi-)autonomously by extracting information from the web.

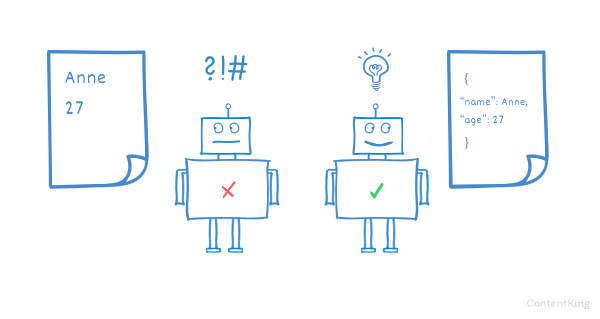

How can we help Google extract information from our sites?

Today (and for several years to come), it is vitally important to help Google understand your content by using:

- Semantic HTML5 structure (including proper use of headings)

- Tables (don't use them for layout!) and ordered and unordered lists to structure your content

- Intelligent use of semantic triples in your copy

- Lots and lots of structured data (principally Schema.org)
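Why do tables and lists help? Because their structure already encodes which value belongs to which label, so a machine can read facts out of them without guessing. The Python sketch below, using only the standard library's `html.parser`, turns a simple two-column HTML table into subject-predicate-object triples. It illustrates the principle only and is not a description of how Google parses pages; the product name and table contents are made up.

```python
from html.parser import HTMLParser


class TableFactExtractor(HTMLParser):
    """Collects the text of each cell, row by row, from simple HTML tables."""

    def __init__(self) -> None:
        super().__init__()
        self.rows: list[list[str]] = []
        self._in_cell = False

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self.rows.append([])
        elif tag in ("th", "td") and self.rows:
            self._in_cell = True
            self.rows[-1].append("")

    def handle_endtag(self, tag):
        if tag in ("th", "td") and self._in_cell:
            # Collapse internal whitespace once the cell is complete.
            self.rows[-1][-1] = " ".join(self.rows[-1][-1].split())
            self._in_cell = False

    def handle_data(self, data):
        if self._in_cell:
            self.rows[-1][-1] += data


def table_to_triples(subject: str, html: str) -> list[tuple[str, str, str]]:
    """Read each two-cell row as (subject, label, value)."""
    parser = TableFactExtractor()
    parser.feed(html)
    parser.close()
    return [(subject, row[0], row[1]) for row in parser.rows if len(row) == 2]


# Hypothetical product table.
PRODUCT_TABLE = """
<table>
  <tr><th>Weight</th><td>1.2 kg</td></tr>
  <tr><th>Color</th><td>Red</td></tr>
</table>
"""
print(table_to_triples("ExampleKettle", PRODUCT_TABLE))
# [('ExampleKettle', 'Weight', '1.2 kg'), ('ExampleKettle', 'Color', 'Red')]
```

The same facts buried in a paragraph of marketing copy would need natural-language processing to recover; in a table, the structure does that work.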

Oh, and don't say "Schema" if you mean "Schema.org", because otherwise Aaron Bradley gets angry 🙂


It's interesting to see that Google now employs hundreds of WordPress developers whose role is to contribute to the WordPress community. Their principal aims are to improve speed (pushing AMP), reduce plugin bloat (TIDE), add tools to monitor performance, encourage progressive themes (PWA), and improve Gutenberg (and encourage its adoption).

In the context of helping Google understand your content better, if you have a WordPress site, switching to Gutenberg is essential, adding AMP is highly effective, and adopting a progressive theme developed by that team would be a good move in 2020.

So… are we building websites for Google?

In a way, yes. As Martha van Berkel puts it, Google is the primary consumer of your content: Google consumes it, then presents it to users. If Google has trouble consuming (and properly digesting) your content, it will be unable or unwilling to show it to users, at which point Google becomes your only consumer. And this situation is perhaps more common than we care to admit: Ahrefs suggests that over 90% of content never gets traffic from Google (even though Google crawls it).

Obviously, write appealingly for your human audience; no need to change that. However, if you want Google to present your content to its users, you really DO need to ensure Google can consume and understand it (see above for the four main methods)!

Where does Bing stand in all this?

Good question – Martha van Berkel suggests that Bing's knowledge graph is actually better than Google's. That is impossible to quantify, but it seems plausible when you compare results across the two. For example (VERY anecdotally), Bing is more ambitious/confident with real-time data: it includes a list of upcoming concerts for William Shatner in its knowledge panel, whereas Google does not.

How do you get into knowledge graphs?

As a rule of thumb, according to Andrea Volpini, both Bing and Google appear to need about 20 to 30 confirmations of coherent, consistent information from trusted sources before including it in their knowledge graphs. For information to be confirmed as a fact, it needs to be corroborated by multiple sources (found in lots of different places online) and be consistent across them (similar to NAP consistency in local search).

It is important to bear in mind that Google is looking for trusted sources, and those are not necessarily the ones with a high PageRank. IMDb, the Guardian newspaper and Wikipedia are highly trusted sources, whereas gossip sites are not. Xin Luna Dong (ex-Google, now Amazon) coined the concept of trust-based knowledge.
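Andrea Volpini's rule of thumb and the point about trust can be combined in a small Python sketch. Everything here is an assumption made for illustration: the source names, the trusted-source list, the 90% agreement ratio and the idea of counting one vote per source are hypothetical, and neither Google nor Bing documents how it actually corroborates facts. The threshold of 20 is simply the lower end of the "20 to 30" rule of thumb.

```python
from collections import Counter
from typing import Optional


def corroborated_fact(
    claims: list[tuple[str, str]],
    trusted_sources: set[str],
    min_confirmations: int = 20,   # lower end of the "20 to 30" rule of thumb
    min_agreement: float = 0.9,    # hypothetical consistency ratio
) -> Optional[str]:
    """Accept a value only if enough distinct trusted sources state it consistently.

    `claims` holds (source, stated_value) pairs for ONE attribute of ONE entity.
    """
    # One statement per trusted source; untrusted sources are ignored,
    # however popular they may be.
    per_source: dict[str, str] = {}
    for source, value in claims:
        if source in trusted_sources:
            per_source.setdefault(source, value.strip().lower())
    if not per_source:
        return None
    value, votes = Counter(per_source.values()).most_common(1)[0]
    if votes < min_confirmations:                 # not corroborated enough
        return None
    if votes / len(per_source) < min_agreement:   # trusted sources disagree too much
        return None
    return value


# Hypothetical data: the headquarters city of a made-up company.
trusted = {f"trusted-{i}.example" for i in range(25)}
claims = [(f"trusted-{i}.example", "Lyon") for i in range(22)]
claims.append(("gossip.example", "Paris"))      # untrusted: ignored
claims.append(("trusted-22.example", "Paris"))  # one trusted contradiction
print(corroborated_fact(claims, trusted))       # lyon
```

Both conditions from the text appear separately: corroboration (enough independent confirmations) and consistency (the sources mostly agree).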

Something I believe - but have no proof of - is that once you've shown yourself to be truthful, trustworthy and reliable over time, Google will start to treat you as a trusted source (at least about yourself), and therefore require significantly less corroboration.

What should we do?

Start with your own website, and begin by explaining who you are with Schema.org markup on your "About us" page. Also consider rewriting the content to be more explicit, using semantic triples, adding HTML lists/tables (where appropriate) and investing in some structural semantic HTML5.
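On an "About us" page, Schema.org markup usually takes the form of a JSON-LD script. The Python helper below is a minimal sketch that generates one. `@context`, `@type`, `name`, `url`, `description` and `sameAs` are real Schema.org/JSON-LD terms, but the choice of properties is an assumption (the interview does not prescribe any), and the company name and URLs are placeholders. `sameAs` lists other pages describing the same entity, which is one way of pointing Google at corroborating sources.

```python
import json


def about_page_jsonld(entity_type: str, name: str, url: str,
                      description: str, same_as: list[str]) -> str:
    """Return a JSON-LD <script> block describing the entity behind an "About us" page."""
    data = {
        "@context": "https://schema.org",
        "@type": entity_type,          # e.g. "Organization" or "Person"
        "name": name,
        "url": url,
        "description": description,
        # Other pages (profiles, directories, press) describing the same entity.
        "sameAs": same_as,
    }
    body = json.dumps(data, indent=2, ensure_ascii=False)
    # Escape "</" so text inside the JSON cannot close the <script> element early.
    body = body.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Placeholder values for a hypothetical company.
print(about_page_jsonld(
    "Organization",
    "Example Widgets Ltd",
    "https://www.example.com/",
    "Example Widgets Ltd designs and sells widgets.",
    ["https://profiles.example.org/example-widgets",
     "https://directory.example.net/example-widgets"],
))
```

The output goes in the page's HTML; it should state the same facts as the visible copy, since consistency is the whole point.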

Then move on to your blog posts, category pages, product pages and services pages… be clear, be accurate, use Schema.org, semantic triples, semantic HTML5… and be consistent with both structure and content.

At this point, you have explained to Google who you are and what you do or have to offer. Now you need to prove that you are telling the truth! So, while you are doing all this on-site work, set up a strategy to ensure that the information you provide about yourself on your own website is corroborated on multiple trusted and pertinent sources across the web. Importantly, it is a good idea to link to those corroborations from your own pages, since this makes it easier for Google to make the connection. Yes, I am telling you to link out!

Remember, you can write whatever you want on your site, but that doesn't make it true. To convince Google that you are telling the truth, you MUST provide evidence to back it up.

How important is the Knowledge Graph for your average SMB?

It is vitally important that all businesses communicate to Google who they are and what they do, starting today.

Even if the return on investment is not immediate, the losses further down the line make it a no-brainer. As traditional search diminishes in importance and alternatives grow, any SMB that is not fully understood by Google, Bing, Amazon, etc. will struggle. If you are unconvinced, read up on these topics: voice search, no-click SERPs, Google Discover, Google For You, smart appliances…

Are there any downsides for content publishers in helping Google understand their content?

Sure, it's not beneficial for everyone. Businesses that are purely information providers are going to need to rethink their business models (they should really be doing this already!). News sites, directories, price comparison engines, dictionaries and many others are seeing traffic decrease as Google increasingly shows SERPs that contain the information directly. Clicking through to the site is no longer necessary (or desirable).

eCommerce sites can arguably be more sanguine. Cindy Krum suggests that if you are selling products, you have every interest in feeding Google reliable and accurate information about your products and services. You need to adapt, though: cover your bases by pushing all that information out to Amazon, Apple, Bing et al.

Andrea Volpini gives some very sage advice: "Create your own internal knowledge graph. That allows you to take control of your data and choose what you share, how you share it and with whom".
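Volpini's advice can be sketched in a few lines of Python: keep every fact in one internal store, and attach to each fact the audiences you are willing to share it with. This is a hypothetical data model for illustration only (the field names, audience labels and product facts are made up); a real internal knowledge graph would more likely live in a graph database or triple store.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Fact:
    subject: str
    predicate: str
    obj: str
    share_with: frozenset[str] = frozenset()  # audiences allowed to receive this fact


def export_for(facts: list[Fact], audience: str) -> list[tuple[str, str, str]]:
    """Export only the facts your own policy allows this audience to see."""
    return [(f.subject, f.predicate, f.obj) for f in facts if audience in f.share_with]


# Hypothetical internal graph for a made-up product.
INTERNAL_GRAPH = [
    Fact("ExampleKettle", "price", "49.00 EUR", frozenset({"google", "amazon", "bing"})),
    Fact("ExampleKettle", "color", "Red", frozenset({"google", "amazon", "bing"})),
    Fact("ExampleKettle", "supplierCost", "18.00 EUR"),  # internal only: never exported
    Fact("ExampleKettle", "launchDate", "2020-03-01", frozenset({"amazon"})),
]

print(export_for(INTERNAL_GRAPH, "google"))
# [('ExampleKettle', 'price', '49.00 EUR'), ('ExampleKettle', 'color', 'Red')]
```

Keeping the sharing policy on each fact, rather than in each export script, means "what you share, how and with whom" is decided in one place.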


Learn more about Knowledge Graphs

Resources:

- Subscribe to Jason's podcast: #SEOisAEO Digital Marketing Podcast with Jason Barnard (the most fun you'll ever have learning about digital from the experts)

- Jason's slides of his YoastCon talk: The Knowledge Graph. What is it? How does it work? How do you get in?

- https://schema.org

- Structured Data through Schema.org

People to follow on social media:

- Aaron Bradley

- Andrea Volpini

- Bill Slawski

- Cindy Krum

- David Harry

- Dawn Anderson

- Martha van Berkel

- Xin Luna Dong

Continue reading in-depth interviews with SEO specialists

You can check out our previous editions of SEO in Focus here:

- March 2019: How real-time data changes the way we do SEO w/ Gerry White

- February 2019: Getting Things Done with Edge SEO w/ Dan Taylor

- January 2019: Why Your SEO Process Needs To Be Agile w/ Kevin Indig

- December 2018: Why Crawler Traps Can Seriously Hurt Your SEO w/ Dawn Anderson