Discovered - currently not indexed: what does it mean and how do you fix it?

The "Discovered - currently not indexed" status means that Google knows about these URLs, but hasn't crawled (and therefore hasn't indexed) them yet.

If you're running a small website (below 10,000 pages) with good quality content, this URL state will resolve automatically once Google has crawled the URLs.

If you're running a small website and you keep getting this status for new pages, then evaluate the quality of your content. It could be that Google doesn't think it's worthy of their time. The confusing thing about this status is that content quality issues aren't necessarily limited to the listed URLs; they could be a site-wide issue.

Check out what John Mueller said in this English Google SEO office-hours on August 13, 2021:

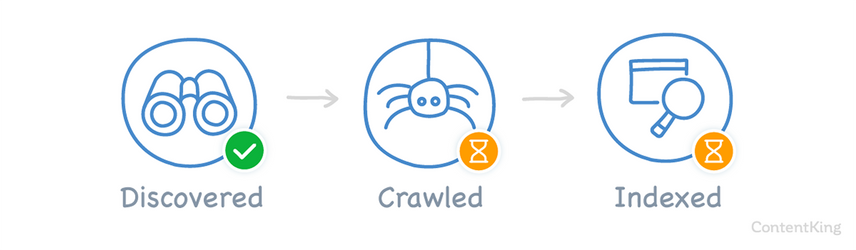

To illustrate, when you see the "Discovered - currently not indexed" status, this is where the URLs are in Google’s indexing process:

What causes this status

If you're encountering this issue on larger websites (10,000+ pages), it may be caused by:

- Overloaded server: Google was having issues crawling your site because it appeared to be overloaded. Check with your hosting provider whether this was the case.

- Content overload: Your website contains much more content than Google's willing to crawl at the moment. They think it's not worth their time. Examples of content that fits this bill: filtered product category pages, auto-generated content, and user-generated content. You can fix this by pruning content, by making the content more unique if you want Google to crawl and index it, or, if Google is discovering content that it shouldn't, by removing links to it and updating your robots.txt file to prevent Google from accessing these URLs.

- Poor internal link structure: Google isn't finding enough ways in to the content that's still due to be crawled. This can be fixed by improving the internal link structure.

- Poor content quality: Improve the quality of your content. Create content that's unique and that adds value for your visitors. Satisfy their user intent. Give them what they want. Help them solve problems.
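To make the internal link structure cause more concrete, here is a minimal sketch that measures how reachable each page is from the homepage. It assumes you already have a crawl export of your own site as a mapping from each page to the pages it links to. The `SITE_LINKS` data and the function names are hypothetical and only for illustration; this is not how Google evaluates links.

```python
from collections import deque

# Hypothetical link graph: each page maps to the internal pages it links to.
SITE_LINKS = {
    "/": ["/blog/", "/products/"],
    "/blog/": ["/blog/post-a"],
    "/products/": ["/products/widget"],
    "/blog/post-a": ["/products/widget"],
    "/products/widget": [],
    "/blog/orphan-post": [],  # no page links here
}


def click_depths(links: dict[str, list[str]], start: str = "/") -> dict[str, int]:
    """Breadth-first search: minimum number of clicks from `start` to each page."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def inbound_counts(links: dict[str, list[str]]) -> dict[str, int]:
    """Count how many distinct other pages link to each page."""
    counts = {page: 0 for page in links}
    for source, targets in links.items():
        for target in set(targets):
            if target != source:
                counts[target] = counts.get(target, 0) + 1
    return counts


depths = click_depths(SITE_LINKS)
inbound = inbound_counts(SITE_LINKS)

# Pages that cannot be reached by following links from the homepage.
unreachable = sorted(page for page in SITE_LINKS if page not in depths)
print(unreachable)
```

Pages that show up as unreachable, or that have very few inbound links and a high click depth, are reasonable candidates for extra internal links.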

Please note that the first two causes (overloaded server and content overload) are classic examples of crawl budget issues. Especially for larger websites, this is an area of concern.
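If you update your robots.txt file to keep Google away from content it shouldn't crawl, it helps to check the rules before deploying them. The sketch below uses Python's standard `urllib.robotparser` module for that. The `/category/filter/` path, the example URLs, and the `is_crawlable` helper are assumptions made up for this illustration. Python's parser does simple prefix matching, so it may not behave exactly like Google's own parser, especially for wildcard rules.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt rules that block filtered category pages.
ROBOTS_TXT = """\
User-agent: *
Disallow: /category/filter/
"""


def is_crawlable(robots_txt: str, url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the given rules allow `user_agent` to fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# A filtered page should be blocked; a regular category page should stay crawlable.
blocked = is_crawlable(ROBOTS_TXT, "https://example.com/category/filter/color-red")
allowed = is_crawlable(ROBOTS_TXT, "https://example.com/category/shoes")
print(blocked, allowed)
```

Running a check like this against a list of URLs you do want indexed is a cheap way to catch a rule that blocks too much.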